Search engine optimization, like any specialized industry, has its own unique set of terminology, definitions and abbreviations.

This SEO glossary contains more than 200 of the most common terms that you are likely to hear and will need to know throughout your SEO career.

A

Above the Fold

The content that appears on a web page before the user scrolls down. In 2012, Google introduced a page layout algorithm that lowered the rankings of sites with too many ads in that space.

AJAX

Asynchronous JavaScript and XML is a web development technique that allows a page to send and receive data from a server in the background and update its content dynamically without reloading.

Algorithm

A complex computer program used by search engines to retrieve data and deliver query results. Search engines use a combination of algorithms to deliver ranked web pages on a results page based on a number of ranking factors and signals.

Algorithm Change

Some algorithmic changes go completely unnoticed. However, the impact of a major algorithmic change can usually be noticed quite quickly, although it sometimes takes weeks for the change to take effect. Algorithmic changes take three forms:

Alt Attribute

An HTML attribute on an image element that provides a text description used by search engines and screen readers (for blind and visually impaired users) to understand the content of the image.
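
As a rough illustration of how an SEO audit might check this attribute, the sketch below uses Python’s standard-library `html.parser` to list images whose alt attribute is missing or empty. The class and function names are hypothetical, and an empty alt is sometimes intentional for purely decorative images, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class AltAuditParser(HTMLParser):
    """Collects the src of every <img> whose alt attribute is missing or blank."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)  # attrs arrives as a list of (name, value) pairs
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


def find_images_missing_alt(html: str) -> list[str]:
    parser = AltAuditParser()
    parser.feed(html)
    parser.close()
    return parser.missing


page = '<img src="logo.png" alt="Company logo"><img src="chart.png"><img src="spacer.gif" alt="">'
print(find_images_missing_alt(page))  # ['chart.png', 'spacer.gif']
```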

AMP

Accelerated Mobile Pages (AMP) is an open-source HTML framework designed to make pages load faster on mobile devices. AMP was once a requirement for gaining visibility in the Top Stories carousel.

The AMP logo has since been removed from search results, and Google has announced that AMP is no longer required for pages to appear in Top Stories. The emphasis is now on Core Web Vitals as the measure of fast page delivery and loading, and the benefit of AMP is now questionable.

Analytics

The science of collecting, analyzing, and interpreting data in order to take future action based on what has worked (or has not worked) in the past.

Anchor Text

The clickable word or words in a link. This text is intended to give people and search engines contextual information about what the linked page or site is about. For example, if you were creating a link to send visitors to Search Engine Journal, “Search Engine Journal” is the anchor text.

Artificial Intelligence (AI)

The science of computers performing tasks that normally require human intelligence. Rather than following a set of pre-programmed rules (such as an algorithm), an AI system is essentially a digital brain that learns. Artificial intelligence can also make decisions without human intervention.

Authority

A combination of signals used by search engines to evaluate websites and web pages for ranking purposes.

Author Authority

Author authority is the concept of using the reputation and credentials of the person who writes online content as a ranking factor. Google originally tested this in conjunction with Google+, but despite several pending patents, there is no evidence that author authority is a ranking factor.

Google is placing emphasis on E-A-T to reduce online misinformation, especially in YMYL niches such as health and finance. In these spaces, building author authority is recommended to keep the brand credible, even if rankings are not currently affected.

B

B2B

Short for business-to-business. In B2B SEO, the purchasing cycle is longer, products and services are more expensive, and the audience consists of professional decision-makers.

B2C

Short for business-to-consumer. In B2C SEO, the purchasing cycle is usually shorter (although it still varies by industry), products and services are (mostly) cheaper, and the audience consists of individual consumers.

Backlink

Baidu

The most popular search engine in China, Baidu, was founded in January 2000 by Robin Li and Eric Xu.

Bing

The name of Microsoft’s search engine. Bing was launched in June 2009, replacing Microsoft Live Search (formerly MSN Search and Windows Live Search). Since 2010, Bing has powered Yahoo’s organic search results as part of an agreement that Microsoft and Yahoo entered into in July 2009.

Black Box

A complex computer program that is poorly understood. Inputs and outputs can be observed, but the process itself cannot be accessed due to its confidential nature. For example, Google’s algorithm is a black box.

Black Hat

Risky tactics that go against Google’s Webmaster Guidelines.

Blog

A website (or section of a website) that publishes content in reverse chronological order, with the most recent content at the top. Content reflects personal or corporate interests and may be written by an individual or a group of contributors. Blogs were originally called weblogs or web logs. However, because “web log” can also refer to server log files, the term was misleading. To avoid this confusion, the shortened form “blog” became the common term.

Bounce Rate

The percentage of website visitors who leave a site without visiting another page on that site. Bounce rates vary widely by industry and niche. While a bounce rate may indicate a potential problem with your content or website, it’s not a direct ranking factor, according to Google.

Bot

Branded Keyword

A query that contains an exact match or variation of a specific company or brand name. For example, “Search Engine Journal,” “SEJ,” “SearchEngineJournal.com,” and “Search Engine Journal SEO 101 Guide” are all branded keywords.

Breadcrumb

A navigation element that helps users easily see where they are on your site.

Broken Link

A link that points to a page that cannot be found, resulting in a 404 error. Typically, a link is broken when:

C

Cache

Technology that temporarily stores web content such as images to reduce future page load times.

Cached Page

A snapshot of a web page as it appeared when the search engine last indexed it.

Canonical URL

An HTML element that specifies the preferred URL when multiple URLs have the same or similar content, in order to reduce duplicate content. The process of choosing and declaring this preferred URL is known as canonicalization.
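
Canonical URLs are commonly declared with a `<link rel="canonical" href="...">` element in the page’s `<head>`. A minimal sketch, assuming you already have a page’s HTML as a string, of how a crawler or audit tool might read that declaration with Python’s standard-library parser. The function name `get_canonical_url` and the example.com URL are illustrative, and the sketch ignores canonicals sent in an HTTP `Link` header.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> element."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        # rel may hold several space-separated tokens, so compare token by token.
        rel_tokens = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_tokens:
            self.canonical = attributes.get("href")


def get_canonical_url(html: str) -> Optional[str]:
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    return parser.canonical


page = """<html><head>
<link rel="stylesheet" href="/style.css">
<link rel="canonical" href="https://www.example.com/shoes/">
</head></html>"""
print(get_canonical_url(page))  # https://www.example.com/shoes/
```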

ccTLD

Country code top-level domain. For example, a UK-based company might use a domain such as www.example.co.uk, where .uk is the ccTLD.

Citation

In local SEO, a citation is any online mention of a business’s name, address, or phone number (NAP). Citations are usually found in directories, social networks and community profiles, resource lists on other sites, or any brand mention on the Internet that does not link to the site. NAP citations can affect rankings and visibility in Google Maps.

Click Bait

Content designed to entice users to click, usually by over-promising or intentionally misleading headlines so publishers can monetize their ads.

Click Depth

Click depth is the number of clicks it takes to reach a page from the homepage or entry page of your site. The more clicks required, the less likely Google is to index or rank the page.

Pages closest to the homepage are considered the most authoritative and are the most likely to be crawled and indexed by Google.

Click depth matters for smooth indexing and for the flow of link equity through a site; therefore, it indirectly influences rankings.
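
Click depth can be measured with a breadth-first search (BFS) over a site’s internal links, starting from the homepage: the first time BFS reaches a page is along its shortest click path. A sketch in Python, assuming the internal link graph is already available as a dictionary mapping each URL to the URLs it links to (the function name and URLs are hypothetical).

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str) -> dict[str, int]:
    """Breadth-first search from the start page; depth = minimum number of clicks."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/blog/", "/about/"],
    "/blog/": ["/blog/post-1/", "/blog/page-2/"],
    "/blog/page-2/": ["/blog/post-2/"],
    "/about/": ["/"],
}
depths = click_depths(site, "/")
print(depths["/blog/post-2/"])  # 3
```

Pages missing from the result cannot be reached by clicking from the start page at all (orphan pages), which is usually worth investigating first.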

Click-Through Rate (CTR)

The rate (expressed as a percentage) at which users click on an organic search result. It is calculated by dividing the total number of organic clicks by the total number of impressions and then multiplying by 100.
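
The calculation translates directly into code. A minimal Python sketch (the function name is illustrative); returning 0 when there are no impressions is an assumption made here so the function never divides by zero.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Organic CTR as a percentage: clicks / impressions * 100."""
    if clicks < 0 or impressions < 0:
        raise ValueError("clicks and impressions must be non-negative")
    if impressions == 0:
        return 0.0  # no impressions means there was nothing to click
    return clicks * 100 / impressions


print(click_through_rate(45, 1_500))  # 3.0
```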

Cloaking

Showing different content or URLs to people and to search engines. This is a violation of Google’s Webmaster Guidelines.

CMS

Short for content management system: a web application that allows you to create, upload, and manage digital assets.

Co-Citation

How often two websites (or web pages) are mentioned together by a third-party site, even though the first two do not link to (or reference) each other. This helps search engines determine topical similarity.

For example, imagine that Search Engine Journal and Search Engine Roundtable never linked to or mentioned each other. Other websites and blogs would still be likely to list both SEJ and SER in lists of popular search engine news publications.

To see this in action, search for: related:https://www.searchenginejournal.com/
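
Search engines do not publish how they measure co-citation, but the basic counting idea can be sketched: for each third-party page, record which sites it mentions, then count how often each pair of sites appears together. The page names and mention data below are hypothetical, and for simplicity the sketch does not check whether the two sites link to each other.

```python
from collections import Counter
from itertools import combinations


def co_citation_counts(mentions_by_page: dict[str, set[str]]) -> Counter:
    """Count how many third-party pages mention each pair of sites together."""
    counts: Counter = Counter()
    for sites in mentions_by_page.values():
        # Sorting gives every pair one fixed order, so (a, b) and (b, a) are not counted separately.
        for pair in combinations(sorted(sites), 2):
            counts[pair] += 1
    return counts


# Hypothetical third-party pages and the sites each one mentions.
mentions = {
    "roundup.example/best-seo-news": {"searchenginejournal.com", "seroundtable.com", "moz.com"},
    "blog.example/resources": {"searchenginejournal.com", "seroundtable.com"},
    "forum.example/thread-42": {"moz.com"},
}
print(co_citation_counts(mentions).most_common(1))
# [(('searchenginejournal.com', 'seroundtable.com'), 2)]
```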

Code To Text Ratio

Code-to-text ratio compares the amount of text displayed on a page with the amount of code used to build the page. A higher proportion of text is considered to provide a better user experience, but it is not a direct ranking factor.
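
Tools differ in how they define “code” and “text,” so treat this as one possible approach: the Python sketch below extracts visible text (skipping `<script>` and `<style>` contents) with the standard-library parser and reports it as a percentage of the page’s total HTML characters. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Gathers text content, skipping anything inside <script> or <style>."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_to_html_ratio(html: str) -> float:
    """Visible text characters (whitespace collapsed) as a percentage of all HTML characters."""
    if not html:
        return 0.0
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split())
    return len(text) * 100 / len(html)


print(text_to_html_ratio("<html><body><p>Hello world</p></body></html>"))  # 25.0
```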

Comment Spam

Poorly written comments, often off-topic and self-promotional, posted by spambots in the hope of getting a free (but ultimately worthless) link.

Competition

There are two types of competition:

Content

“Content is King”

A phrase often used by conference speakers and authors of popular SEO (and digital marketing) publications. In this context, “content is king” usually means that content is essential to success in SEO, digital marketing, or business.

The phrase actually comes from Bill Gates’ essay “Content is King,” published January 3, 1996.

Conversion

When a user completes a desired action on the site. Examples of conversions include:

Conversion Rate

The rate (expressed as a percentage) at which site visitors complete a desired action. It is calculated by dividing total conversions by total traffic, then multiplying by 100.
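
A minimal Python sketch of this formula, assuming traffic is measured in visits (sessions); some teams divide by users instead, which gives a different rate. The function name is illustrative.

```python
def conversion_rate(conversions: int, visits: int) -> float:
    """Conversion rate as a percentage: conversions / visits * 100."""
    if visits <= 0:
        raise ValueError("visits must be positive")
    return conversions * 100 / visits


print(conversion_rate(30, 1_200))  # 2.5
```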

Conversion Rate Optimization (CRO)

The process of improving the number or quality of conversions that occur on a website. Popular CRO tactics include testing changes to page design, copy, images, pricing, calls to action, and messaging.

Core Update

A broad update that Google makes to its core algorithm. Google sometimes announces a specific theme for an update, such as the Page Experience update, but core updates are non-specific and happen several times a year.

Core Web Vitals

A set of metrics that measure a website’s performance in terms of user experience. Core Web Vitals were introduced with the Page Experience update as the main signals of a good user experience:

Google confirmed Core Web Vitals as a ranking factor, but said relevance and other factors may be more important.

Correlation

The degree to which two or more variables are related. Because search algorithms are black boxes, correlation is often used in SEO research to infer relationships between various factors and search rankings. However, always remember that correlation is not causation.
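
A common measure in this kind of research is the Pearson correlation coefficient, which ranges from -1 to 1. A sketch in Python with made-up data: a strong negative value here means pages with more referring domains tend to hold better (numerically lower) positions, but on its own it says nothing about whether the links cause the rankings. The function name and data are hypothetical.

```python
from math import sqrt


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length (at least 2 points)")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a sample has no variance")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: referring domains vs. ranking position (1 = top result).
referring_domains = [120, 95, 60, 40, 10]
positions = [1, 2, 3, 4, 5]
print(round(pearson_r(referring_domains, positions), 3))
```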

Crawl Budget

The total number of URLs that search engines can and are willing to crawl on your site over a specified time period.

Crawl Error

Crawler

The program that search engines use to crawl the web. Bots visit websites to gather information and add it to, or update, the search engine’s index.

Also known as: Bot, Spider, Web Crawler

Crawling

The process by which a crawler gathers information from billions of public web pages in order to add, update, and organize pages in the search engine’s index.

CSS

Cascading Style Sheets describe how HTML elements should appear on web pages (e.g., colors and fonts) and how they adapt for display on different devices.

Customer Journey

All the potential moments (or touchpoints) at which a potential customer comes into contact or interacts with the brand. All of these interactions are designed to ultimately persuade, influence, and convert a potential customer into a customer, client, or subscriber.

While the customer journey can vary greatly depending on the type of business and industry, it typically consists of four main “steps”:

Awareness > Consideration > Decision > Retention

Google’s Avinash Kaushik offers an alternative framework:

See > Think > Do > Care

Also Known As: Purchase Process, Consumer’s Path To A Decision, Customer Path To Online Purchase, Marketing Path, To Buy Path, Purchase Path

D

Data

All the hard numbers representing real customers (who, what, where, when, why, and how) that are needed to make informed decisions about SEO strategies and tactics.

Dead-End Page

A web page that does not link to any other page. It is so called because once a user or bot lands on that page, there is nowhere else to go.

Deep Link

Deep Link Ratio

When a link points directly to a page on your site other than the homepage, it is called a deep link. The ratio of deep links to links pointing at your homepage is known as the deep link ratio.

Having links that point directly to deep pages on your site is considered an indication of the quality of the site’s content: the more deep links you have, the better the website is presumed to be. However, there is no evidence that the deep link ratio has any direct impact on rankings.

De-index

When Google removes a website or web page, temporarily or permanently, from its search index and therefore from its search results. Search Console provides a tool for removing URLs voluntarily; however, a site may also be removed from the index through a manual action, as a penalty for violating Google’s Webmaster Guidelines.

Direct Traffic

In Google Analytics, visits from users who reach your website by typing a URL directly into a browser or clicking a bookmark are reported as direct traffic. Google Analytics also counts any traffic whose source it does not recognize as direct traffic.

Directory

A list of websites, usually organized by category and maintained by editors. Depending on the directory, inclusion may be free or paid. In the past, links from directories (e.g., DMOZ) were highly sought after, which led to widespread abuse and a general devaluation of this type of link building.

Also known as: web directory, link directory

Disavow

If your link profile contains a large number of spammy, artificial, or low-quality inbound links that may be harming your rankings, and you cannot get them removed for a legitimate reason (e.g., the link is on a site you have no control over), you can use Google’s Disavow Tool to tell Google to ignore these links.
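
The Disavow Tool accepts a plain-text file with one entry per line: either a full URL, or a `domain:` prefix to disavow every link from a domain, with lines starting with `#` treated as comments. A small Python sketch that assembles such a file from lists you have already audited; the function name and domains are hypothetical, and Google’s current documentation remains the authority on format details.

```python
def build_disavow_file(domains: list[str], urls: list[str]) -> str:
    """Build disavow-file text: a comment line, then one 'domain:' entry or URL per line."""
    lines = ["# Links we asked to have removed without success"]
    # Normalize before de-duplicating so "Spam.example " and "spam.example" collapse to one entry.
    normalized_domains = sorted({d.strip().lower() for d in domains})
    lines += [f"domain:{domain}" for domain in normalized_domains]
    lines += sorted({u.strip() for u in urls})
    return "\n".join(lines) + "\n"


print(build_disavow_file(
    domains=["spammy-links.example", "link-farm.example"],
    urls=["https://blog.example/cheap-links-page.html"],
), end="")
```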

DMOZ

Also known as the Open Directory Project. This human-edited web directory was launched on June 5, 1998 and closed on March 17, 2017.

Do-follow

A link that does not use the “nofollow” attribute. In other words, a regular link.

Domain

A website address, usually ending with an extension such as .com, .org, or .net. For example, www.searchenginejournal.com is the domain of this website.

Domain Age

The length of time since a domain was first registered is called its domain age. For example, Search Engine Journal was registered on June 10, 2003, so it has a significant domain age.

It was once thought that a higher domain age would give a domain more authority, but this idea of domain age as an influence on ranking has been rejected.

Domain History

All previous activity on a domain, including its backlinks and any websites previously built on it, is known as its domain history.

If a previous site on the domain received a penalty, the penalty can remain associated with the domain and cause problems for the new owner.

It is recommended that you always check your domain history before purchasing a domain.

Doorway Page

Web pages created to rank in search engines for specific keywords, with the sole purpose of sending users who land on them to another site.

DuckDuckGo

A search engine founded on September 28, 2008. It is often praised for its emphasis on user privacy and its lack of filter bubbles (search personalization). DuckDuckGo draws on over 400 sources to compile its search results, including vertical search engines, its own crawler (DuckDuckBot), Bing, and Yandex. There were 4 billion searches on DuckDuckGo in 2016.

Duplicate Content

When a significant amount of content on one web page matches or is very similar to content that exists elsewhere on the same site or on a completely different site.
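
Google has not published exactly how it detects duplicates, but a classic near-duplicate technique is w-shingling with Jaccard similarity: split each text into overlapping word sequences and compare the overlap of the two sets. A Python sketch of that textbook technique (not Google’s method), using 3-word shingles; the function names are illustrative.

```python
def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences ("shingles") in lowercased text."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard_similarity(a: str, b: str, k: int = 3) -> float:
    """Shared shingles divided by all distinct shingles; 1.0 means identical shingle sets."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        # Both texts have fewer than k words: fall back to an exact word comparison.
        return 1.0 if a.lower().split() == b.lower().split() else 0.0
    return len(sa & sb) / len(sa | sb)


original = "the quick brown fox jumps over the lazy dog"
rewrite = "the quick brown fox leaps over the lazy dog"
print(jaccard_similarity(original, rewrite))  # 0.4
```

Changing a single word removes every shingle that contains it, which is why one substitution in a nine-word sentence drops the score to 0.4; longer texts with small edits score much closer to 1.0.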

Dwell Time

The amount of time between a user clicking on a search result and returning to the SERP from the website. A short dwell time (e.g., less than 5 seconds) may indicate to search engines that the content is of poor quality.

E

E-A-T

Expertise, Authoritativeness, and Trustworthiness: concepts taken from Google’s Search Quality Rater Guidelines that became known as E-A-T.

E-A-T represents the signals used by Google to determine content quality, but it is not a direct ranking factor.

Google is committed to stopping the spread of misinformation, especially from sites operating in Your Money or Your Life (YMYL) niches, such as finance or health.

E-commerce

The buying and selling of products and services online.

Editorial Link

A link that one site gives to another without the recipient asking or paying for it.

.edu Links

Educational institutions have a .edu top-level domain (TLD), for example, stanford.edu. A link from such a site is called a .edu link.

Links from .edu sites were considered “hard to come by” and were believed to have greater link building value. As a result, link builders targeted .edu links until Google devalued links from many lesser-known .edu sites and ignored any benefit they might pass.

Engagement Metrics

Methods for measuring how users interact with websites and content. Examples of engagement metrics include:

Entities

People, places, organizations, websites, events, groups, facts, and more.

External Link

F

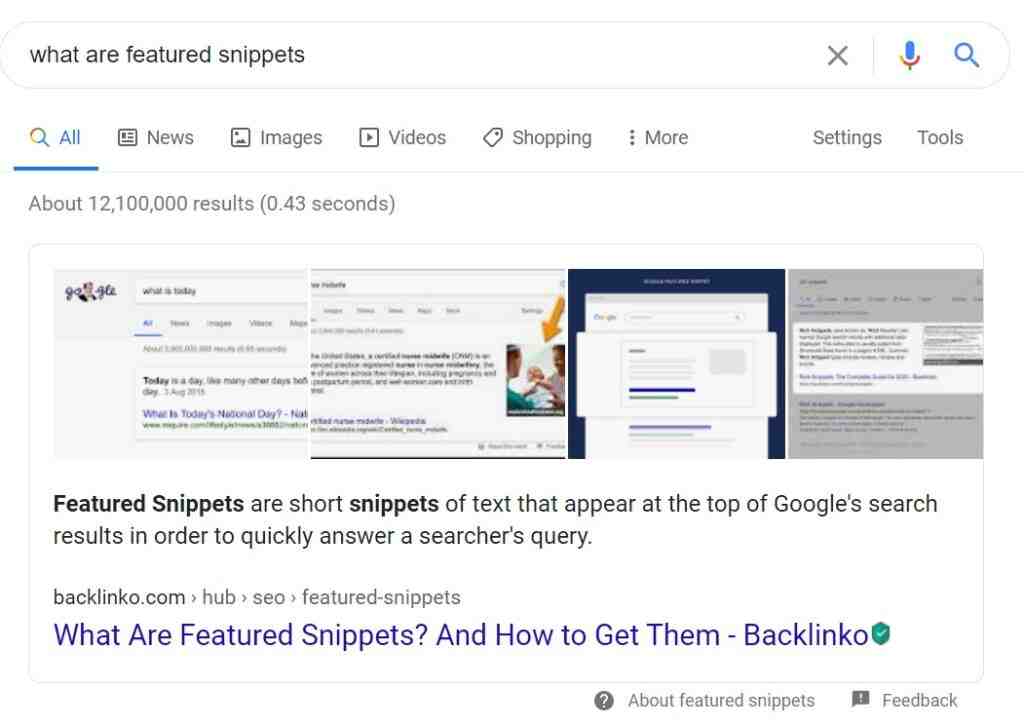

Featured Snippet

For some queries, usually questions (i.e., who/what/where/when/why/how), Google sometimes displays a special box above the organic search results. This box contains a summary (as a paragraph, list, table, or video) along with the publication date, the page title, a link to the page the answer came from, and its URL.

Findability

How easily content can be found on a website, both internally (by users) and externally (by search engines).

First Link Priority

An internal linking concept based on the idea that Google treats links differently when two links on a web page point to the same page. Google was thought to give the anchor text of the first link greater weight.

There is no conclusive evidence of how Google treats duplicate links on a page. When linking to a page, it is recommended that you link for the user first and use relevant anchor text where appropriate.

Footer Links

Links that appear at the bottom (or “footer”) of a web page.

Freshness

Freshness refers to how recently content on the Internet was published or updated.

Google is believed to prioritize fresh content in certain niches and for certain search queries, such as searches related to COVID announcements or sports results.

Query Deserves Freshness (QDF) is the part of Google’s algorithm that determines when a query should return up-to-date information, especially for breaking news, recurring events, informational queries, and product queries.

G

Google

A search engine founded by Larry Page and Sergey Brin in September 1998. Google marked a radical move away from human-edited web directories, relying instead on web indexing technology and a complex algorithm that analyzes hyperlink patterns to rank websites. Google is the most used search engine in almost every country in the world.

Google Analytics

A free web analytics program that can be used to track audience behavior, traffic sources, content performance, and more.

Google Bomb

The practice of making a page rank number one for a surprising or controversial search phrase. This was achieved by having a large number of sites link to a specific web page with specific anchor text to help it rank for that term.

For example, in 2003, the White House biography of President George W. Bush ranked first in searches for “miserable failure.”

Googlebot

The web crawler Google uses to find and add new websites and web pages to its index.

Google Dance

A term used since 2002 for the period of ranking volatility that occurred when Google updated its search index, roughly once a month.

Google Hummingbird

The Google search algorithm that was officially announced in September 2013 after having been used for a month. Hummingbird’s goal was to better understand the full context of the queries (i.e. semantic search) rather than specific keywords to provide better results.

Google Panda Algorithm

A major update to the Google algorithm that was initially rolled out in February 2011, followed by numerous subsequent updates. Google Panda’s goal was to reduce the visibility of low-value content, often created by ‘content farms’. In 2016, Panda became part of Google’s core ranking algorithm.

Google Penguin Algorithm

A major Google algorithm update launched in April 2012, followed by a series of updates and refreshes. Penguin’s goal was to reduce the visibility of over-optimized sites or sites abusing certain spam tactics (e.g., low-quality links, keyword stuffing). In 2016, Penguin began operating in real time as part of Google’s core algorithm.

Google Pigeon Update

The name (given by the SEO industry, not Google) of a major update to Google’s local search algorithm, introduced on July 24, 2014. Pigeon’s goal was to increase the accuracy and relevance of local searches by using more traditional Google ranking signals and improving distance and location ranking parameters.

Google RankBrain

A major change to Google’s algorithm that was officially announced in October 2015, although it had been tested for months beforehand. With RankBrain, Google added machine learning to its algorithm, and RankBrain was named the third most important ranking signal. In June 2016, it was revealed that RankBrain was involved in every query and influences rankings.

Google Sandbox

The theorized and debated (but never confirmed by Google) “waiting period” that prevents new sites from getting the full benefit of their optimization efforts. This effect is most commonly seen with new sites targeting competitive keywords, and it can only be overcome once the site gains sufficient authority.

Google Search Console

Google Search Console offers several helpful features, including the ability to monitor sites for crawl errors and site speed. It is also used to notify site owners about manual actions.

Google Search Quality Rater Guidelines

A guidance document that Google provides to its search quality raters, who refer to it when manually reviewing websites.

The document was originally confidential, but Google later published its Search Quality Rater Guidelines online, and they are updated from time to time.

The information in the document is a guide to creating high-quality content and incorporates the concept of E-A-T. The guidelines are not a list of direct ranking factors.

Google Trends

A site where you can view data visualizations about the latest search trends, articles, and topics.

Google Webmaster Guidelines

Google’s guidelines describing good website optimization practices, as well as prohibited practices that may result in a manual action.

.gov Links

Government organizations have a .gov top level domain (TLD). For example, usa.gov. A link from such a site is known as a .gov link.

Only US government entities can apply for and obtain a .gov TLD. Other countries have their own national version, for example .gov.uk.

Government TLDs are highly regulated and trusted sources of information. For this reason, a link from a .gov domain is considered highly valuable and is a frequent target of spammers.

Gray Hat

Techniques in a supposed “gray” area: they follow Google’s Webmaster Guidelines but add an element that bends the rules a bit.

Guest Blogging

A popular link building tactic that involves creating content for other websites in exchange for a backlink pointing to your own pages.

H

Heading

HTML heading tags (H1-H6) divide content into sections by importance, with H1 being the most important and H6 the least important. Heading tags should be used naturally and should include your target keywords where relevant, as this can provide a small SEO benefit.

Headline

Head Term

A popular keyword with high search volume that is usually difficult to rank for.

Also Known As: head keyword, short tail

Hidden Text

Any text that users cannot see, intended to manipulate search rankings by loading web pages with keyword-rich content and copy. This technique is contrary to Google’s Webmaster Guidelines and may result in a manual action. For example, adding text that is:

Hilltop Algorithm

Influenced by the HITS algorithm and added to Google’s algorithm in 2003, Hilltop assigned “expert” status to certain websites or web pages about a specific topic that link to non-affiliated pages on that topic.

HITS Algorithm

Hyperlink-Induced Topic Search (HITS) is a link analysis algorithm that evaluates pages not only on their content and incoming links (authorities) but also on their outgoing links (hubs).
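
A compact sketch of the textbook HITS iteration in Python, on a small hypothetical link graph: each page’s authority score is the sum of the hub scores of the pages linking to it, each hub score is the sum of the authority scores of the pages it links to, and both are normalized every round. This illustrates the algorithm itself, not how any search engine uses it; the function name and page names are hypothetical.

```python
from math import sqrt


def hits(links: dict[str, list[str]], iterations: int = 50) -> tuple[dict[str, float], dict[str, float]]:
    """Return (hub, authority) scores computed by repeated HITS updates."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    hub = {p: 1.0 for p in pages}
    auth = {p: 0.0 for p in pages}
    for _ in range(iterations):
        # Authority: sum of the hub scores of every page that links in.
        auth = {p: 0.0 for p in pages}
        for source, targets in links.items():
            for target in targets:
                auth[target] += hub[source]
        norm = sqrt(sum(v * v for v in auth.values())) or 1.0
        auth = {p: v / norm for p, v in auth.items()}
        # Hub: sum of the freshly updated authority scores of every page it links out to.
        hub = {p: sum(auth[t] for t in links.get(p, [])) for p in pages}
        norm = sqrt(sum(v * v for v in hub.values())) or 1.0
        hub = {p: v / norm for p, v in hub.items()}
    return hub, auth


# Hypothetical link graph: each key links to the pages in its list.
graph = {
    "directory": ["page-a", "page-b", "page-c"],
    "blog": ["page-a", "page-b"],
    "page-a": ["page-b"],
}
hub_scores, authority_scores = hits(graph)
print(max(authority_scores, key=authority_scores.get))  # page-b
print(max(hub_scores, key=hub_scores.get))  # directory
```

The page with the most incoming links from good hubs ends up as the top authority, while the page that links out to the most good authorities ends up as the top hub.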

Homepage

The default or introductory web page of a website.

.htaccess File

A server configuration file that can be used to rewrite and redirect URLs.

HTML

Short for HyperText Markup Language. HTML tags are specific code elements that can be used to improve the SEO performance of websites and web pages.

HTTP

Hypertext Transfer Protocol is the protocol used to transfer data from a web server to a web browser.

HTTPS

Hypertext Transfer Protocol Secure uses the Secure Sockets Layer (SSL) protocol, or its successor Transport Layer Security (TLS), to encrypt data sent between a website and a web browser. HTTPS is a minor Google ranking factor.

Hub Page

An authoritative central resource (e.g., a page or article) dedicated to a specific topic (keyword) that is regularly updated and links out to related web pages.

I

Inbound Link

A link to a website from an external website. For example, if Search Engine Journal were to link to Google, this would count as an inbound link on Google’s side; if Google were to link to Search Engine Journal, it would be an inbound link on SEJ’s side.

Index

The database that search engines use to store and retrieve the information gathered during crawling and indexing.
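
A core data structure behind search indexes is the inverted index, which maps each term to the documents that contain it so a query can be answered without scanning every page. A minimal Python sketch of that idea, under the simplifying assumption of whitespace tokenization and plain AND matching; real search indexes also store positions, ranking signals, and much more. All names and pages are hypothetical.

```python
from collections import defaultdict

PUNCTUATION = ".,!?;:\"'()"


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and trim surrounding punctuation."""
    tokens = (word.strip(PUNCTUATION) for word in text.lower().split())
    return [t for t in tokens if t]


def build_inverted_index(docs: dict[str, str]) -> dict[str, set[str]]:
    """Map each term to the set of document IDs that contain it."""
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return dict(index)


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return IDs of documents containing every query term (a simple AND query)."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))  # copy so the index itself is never modified
    for term in terms[1:]:
        results &= index.get(term, set())
    return results


pages = {
    "page-1": "Core Web Vitals measure page experience.",
    "page-2": "Crawl budget matters for large sites.",
    "page-3": "Page experience signals include Core Web Vitals.",
}
index = build_inverted_index(pages)
print(sorted(search(index, "core web vitals")))  # ['page-1', 'page-3']
```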

Indexability

How easily a search engine bot can understand and add a page to its index.

Indexed Page

A web page that has been discovered by a crawler and added to the search engine’s index, and that may appear in search results for relevant queries.

Information Architecture

How the website is organized and where the different content and navigation elements are located on the website.

Information Retrieval

The process of finding information (e.g. text, images, video) from a large database and then presenting the most relevant information to the end user.

Internal Link

IP Address

Internet protocol address. IP addresses can be:

Neither of them will help you get a better ranking; however, a dedicated IP address can increase the speed of your website.

J

JavaScript (JS)

A programming language that allows you to dynamically insert content, links, metadata, or other elements into web pages. JavaScript can make it harder for search engine bots to crawl and index web pages, and it can increase page load times for users.

K

Keyword

A word or phrase that an SEO professional or marketer targets in order to match what users are searching for and rank for it. Words used on web pages help search engines determine which pages are most relevant to show in organic search results when a searcher enters a query. Keywords usually represent topics, ideas, or questions.

Keyword Cannibalization

A type of self-competition that occurs when multiple pages from one site rank for the same query on the SERP. This can result in lower CTR, diluted authority, and lower conversion rates than if you had one consolidated page that ranked well.
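
One simple way to spot candidates is to group ranking data by query and flag queries where more than one URL from the same site appears. A Python sketch, assuming (query, URL) pairs exported from a rank tracker or a query-and-page report; the function name and data are hypothetical, and a flagged query is only a prompt for review, since two ranking pages are not always a problem.

```python
from collections import defaultdict


def find_cannibalized_queries(rows: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Return each query for which two or more distinct URLs rank, with those URLs."""
    urls_by_query: dict[str, set[str]] = defaultdict(set)
    for query, url in rows:
        urls_by_query[query.strip().lower()].add(url)
    return {query: sorted(urls) for query, urls in urls_by_query.items() if len(urls) > 1}


# Hypothetical (query, ranking URL) rows for one site.
rows = [
    ("running shoes", "/shoes/"),
    ("Running Shoes", "/blog/best-running-shoes/"),
    ("trail shoes", "/trail/"),
    ("trail shoes", "/trail/"),
]
print(find_cannibalized_queries(rows))
# {'running shoes': ['/blog/best-running-shoes/', '/shoes/']}
```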

Keyword Density

How often a word or phrase appears in the body of a web page, usually expressed as a percentage of the page’s total word count. At best, this unproven concept is outdated, if it ever had any relevance to search engines. There is no ideal percentage that will improve a page’s ranking.
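
For reference only, one common way tools compute it is occurrences × words in the phrase ÷ total words × 100. A Python sketch of that formula (an assumption, since tools vary in how they count words and phrases), included to make the outdated metric concrete rather than as a target to optimize for. The function name and sample copy are hypothetical.

```python
import re

WORD = re.compile(r"[a-z0-9']+")


def keyword_density(text: str, phrase: str) -> float:
    """(occurrences x words in phrase) / total words x 100."""
    words = WORD.findall(text.lower())
    target = WORD.findall(phrase.lower())
    if not words or not target:
        return 0.0
    n = len(target)
    # Slide an n-word window across the text and count exact phrase matches.
    matches = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return matches * n * 100 / len(words)


sample = "Running shoes for trail running. Our running shoes are light."
print(keyword_density(sample, "running shoes"))  # 40.0
```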

Keyword Research

The process of discovering relevant topics, subjects, and terms that people enter into search engines, along with the search volume and level of competition for those terms. This practice is made possible by various free and paid tools.

Keyword Prominence

When a keyword is placed as high on a web page as possible to influence the page’s ranking for that search term.

Placing a keyword near the beginning of a page, for example in the first paragraph, sends a strong signal to Google about the page’s topic.

Keyword prominence acts as a ranking signal if the page’s theme is relevant to the keyword.

Keyword Stemming

In language and grammar, words are built around a stem, with variations formed from that stem. For example, “shopper,” “shopping,” and “shops” are all variations of the stem “shop.”

When trying to rank for a term such as “shop,” Google will treat variations of that word on the page (shopper, shopping) as the same keyword. This also applies to plurals such as bicycle/bicycles or fly/flies.

Keyword stemming is Google’s ability to understand the variations of a keyword, and it is part of its algorithm.

Keyword Stuffing

Adding irrelevant keywords, or repeating keywords unnaturally, on a web page in the hope of increasing its search ranking. This spam tactic is contrary to Google’s Webmaster Guidelines and may result in a manual action.

Knowledge Graph

The entity database that Google uses to reveal facts and information about people, places, and things (or entities) – and their combinations – in the Knowledge Panel or carousel at the top of the search results for relevant queries.

Knowledge Panel

The box that appears at the top of page 1 of Google’s search results, or in the right-hand column (desktop only), for relevant queries. This panel contains facts and information about people, places, and things, as well as links to related sites or Google searches.

KPI

Short for key performance indicator: a measurement companies use to evaluate whether their marketing and business goals are being met.

L

Landing Page

Latent Semantic Indexing (LSI)

An information retrieval method designed to help search engines identify the correct context of a word. LSI doesn’t play a useful role in SEO these days.

See: Google latent semantic indexing

Lead

A person who may be interested in your product(s) and/or service(s). A lead is a prospective customer who is willing to provide their email address (and usually other personal or contact details) in exchange for something they deem valuable from the website.

Link

A connection between two web pages, built with HTML. Links allow users to navigate to other pages, websites, social networks, and applications. Links play a crucial role in how search engines evaluate and rank sites.

Link Bait

Deliberately provocative content to grab people’s attention and attract links from other websites.

Link Building

The process of acquiring links from other trusted and relevant websites to improve your rankings and visibility in organic search results. Link building can be done by:

Link Equity

The value that inbound links pass in terms of relevance, authority, and trust.

Link Farm

When a group of sites all link to one another, usually through automated programs, in the hope of artificially inflating search rankings. This is a spam tactic.

Also known as: Link Network, Blog Network, Private Blog Network

Link Juice

A term that should never be used in public or on the Internet.

Did you mean…: Authority or PageRank

Link Profile

All the links that point to a specific website. The quality of your site’s link profile can vary greatly depending on how you acquired those links and the anchor text used.

Link Stability

When the link remains on the site for a certain period of time without changes or updates.

Google applied for a patent that dealt with link removal and the frequency of link changes on a page, but there is no evidence that link stability has any effect on ranking.

Link Velocity

How fast (or slow) a site accumulates links. A sudden increase in link velocity could be a sign of spamming, or it could be caused by viral marketing or by publishing something newsworthy (whether intentionally or unintentionally).

Links, Internal

Links from one page to another page on the same domain. Internal links can be placed within the page content, in the main navigation menu, or in the sitemap.

Internal links can be used to indicate the importance of a page on your site. For example, a page linked directly from the home page signals more importance than a page that is four clicks away from the home page.

Internal links are important because Googlebot uses them to navigate your site and discover new pages.
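Click depth from the home page can be measured with a breadth-first search over the internal link graph. The sketch below assumes the graph has already been collected (for example, by a crawler) as a mapping from each URL to the internal URLs it links to; the URLs are hypothetical.

```python
from collections import deque

def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Return the minimum number of clicks from `home` to every reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:          # first visit in BFS = shortest click path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

site = {
    "/": ["/products/", "/blog/"],
    "/products/": ["/products/bikes/"],
    "/blog/": ["/blog/post-1/"],
    "/blog/post-1/": ["/products/bikes/", "/blog/archive/"],
    "/blog/archive/": ["/blog/old-post/"],
}
print(click_depths(site, "/"))
# {'/': 0, '/products/': 1, '/blog/': 1, '/products/bikes/': 2, '/blog/post-1/': 2, '/blog/archive/': 3, '/blog/old-post/': 4}
```

Pages missing from the result are not reachable through internal links at all.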

Links, NoFollow

In a hyperlink, rel=“nofollow” is an attribute added to the link to show that you are not giving any credit or endorsement to the page you link to.

Google originally introduced nofollow to curb comment spam and devalued all nofollow links, but it has since changed the way the attribute works.

Nofollow is now treated as a “hint”, meaning Google can still use some information about linking patterns, but generally accepts that no weight should be passed through the link.

Links, Outbound or External

An external or outbound link is a link from a page on domain x to a page on domain y. For example, a link from searchenginejournal.com/google to google.com/about-us.

External links to authoritative sites can add value to a page, as they show that the content is well researched and uses trusted sources.

External links are not considered to have any direct influence on rankings and should only be used to cite sources where appropriate.
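Whether a link is internal or external comes down to comparing hostnames. This sketch uses Python's standard urllib.parse; treating "www." as equivalent to the bare domain, and every other subdomain as a separate host, are simplifying assumptions.

```python
from urllib.parse import urljoin, urlparse

def normalize_host(url: str) -> str:
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host

def classify_link(page_url: str, href: str) -> str:
    """Label `href` (found on `page_url`) as 'internal' or 'external'."""
    absolute = urljoin(page_url, href)   # resolve relative links such as "/category/seo/"
    return "internal" if normalize_host(absolute) == normalize_host(page_url) else "external"

page = "https://www.searchenginejournal.com/google"
print(classify_link(page, "/category/seo/"))               # internal
print(classify_link(page, "https://google.com/about-us"))  # external
```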

Log File

A file that records information about users such as IP addresses, browser type, Internet Service Provider (ISP), date / time stamp, referring / exit pages, and number of clicks.

Log File Analysis

The process of mining log file data to identify trends, administer the site, track user traffic to the site, gather demographic information, and understand how search engine bots crawl and index the site.
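As a small illustration of log file analysis, the sketch below parses lines in the common Apache/Nginx "combined" log format and counts, per URL and status code, the requests whose user agent contains "Googlebot". The sample lines are made up and use documentation-only IP addresses. Matching on the user-agent string is a simplifying assumption: it can be spoofed, so a real audit would also verify crawler IPs (for example, with a reverse DNS lookup).

```python
import re
from collections import Counter

# host ident user [time] "METHOD path protocol" status bytes "referrer" "user agent"
LINE_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)

def googlebot_hits(lines):
    """Count (path, status) pairs for requests whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(match.group("path"), match.group("status"))] += 1
    return hits

bot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'192.0.2.10 - - [10/Oct/2023:13:55:36 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "{bot}"',
    '198.51.100.7 - - [10/Oct/2023:13:56:01 +0000] "GET /blog/ HTTP/1.1" 200 5120 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    f'192.0.2.10 - - [10/Oct/2023:13:57:12 +0000] "GET /old-page/ HTTP/1.1" 404 312 "-" "{bot}"',
    f'192.0.2.10 - - [10/Oct/2023:13:58:40 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "{bot}"',
]
print(googlebot_hits(sample_log).most_common())
# [(('/blog/', '200'), 2), (('/old-page/', '404'), 1)]
```

A crawled 404 like `/old-page/` is the kind of finding this analysis is meant to surface.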

Long-Tail Keyword

M

Machine Learning

A subset of artificial intelligence where the system uses data to learn and adapt a complex process without human intervention.

Manual Action

Google’s term for a penalty. Google takes a manual action against a site after a human reviewer (i.e. a Google employee) has manually checked the site and confirmed that it does not comply with Google’s Webmaster Guidelines. Penalized websites can be demoted or completely removed from the search results. Manual actions can apply to the entire site or only to certain pages.

Meta Description

A tag that can be added to the <head> section of an HTML document. It acts as a description of the webpage’s content. This content is not used in ranking algorithms, but it is often displayed as the snippet that appears in search results. Accurate and engaging descriptions can increase your organic click-through rate.

Meta Keywords

A tag that can be added to the <head> section of an HTML document. Adding keywords here will not help you rank: search engine algorithms have ignored this tag for ranking purposes for years, due to abuse (in the form of keyword stuffing).

Meta Tags

Information that appears in a webpage’s HTML source code to describe its content to search engines. The title tag and meta description are the most common types of meta tags in SEO.

Metric

A method of measuring activity and performance to evaluate the success (or lack thereof) of an SEO initiative.

N

Natural Link

Negative SEO

A rare but malicious practice in which Internet spam techniques are used to harm the search rankings of another site, usually a competitor.

Niche

A defined market or area of interest consisting of a relatively small group of people who share a strong interest.

Noarchive Tag

Meta tag telling search engines not to cache a copy of your page.

Nofollow Attribute

A link attribute that tells search engines not to follow one particular outbound link or pass credit through it. It is used when a website does not want to pass authority to another website, or because the link is paid. The nofollow attribute looks like this:

<a href="http://www.example.com/" rel="nofollow">Here is the anchor text</a>
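Because rel can hold several space-separated values, and Google also recognizes rel="sponsored" and rel="ugc" alongside nofollow, a check should split the attribute into tokens rather than compare the whole string. A minimal sketch using Python's built-in html.parser on hypothetical markup (including a nofollow link like the one shown):

```python
from html.parser import HTMLParser

# rel values that tell search engines not to treat the link as an endorsement.
UNENDORSED = {"nofollow", "sponsored", "ugc"}

class LinkRelParser(HTMLParser):
    """Collect (href, rel tokens) for every <a> element that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if href:
            # rel is a space-separated list, e.g. rel="sponsored noopener"
            rel = set((attrs.get("rel") or "").lower().split())
            self.links.append((href, rel))

html = (
    '<a href="http://www.example.com/" rel="nofollow">Here is the anchor text</a>'
    '<a href="https://example.org/offer" rel="Sponsored noopener">Offer</a>'
    '<a href="/about">About us</a>'
)
parser = LinkRelParser()
parser.feed(html)
for href, rel in parser.links:
    print(href, "-> no endorsement" if rel & UNENDORSED else "-> followed")
```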

Noindex Tag

A meta tag that tells search engines not to include a specific web page in their index.

Nosnippet Tag

Meta tag telling search engines not to display a description with your listing.
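Noarchive, noindex, and nosnippet are all values of the robots meta tag, for example `<meta name="robots" content="noindex, nosnippet">`. The sketch below uses Python's built-in html.parser to collect those directives from a page. The sample HTML is hypothetical, and only the generic "robots" name is checked (crawler-specific names such as "googlebot" are ignored here).

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            # Directives are comma-separated and case-insensitive.
            for value in (attrs.get("content") or "").split(","):
                if value.strip():
                    self.directives.add(value.strip().lower())

html = '<html><head><meta name="robots" content="noindex, NoArchive"></head><body></body></html>'
parser = RobotsMetaParser()
parser.feed(html)
print(sorted(parser.directives))  # ['noarchive', 'noindex']
```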

“(not provided)”

Following search engines’ transition to secure search in 2011, keyword data was removed from Google Analytics and replaced with the phrase “(not provided)”, making it impossible to determine which queries were responsible for visitors finding the page.

O

Off-Page SEO

Demand generation and brand awareness activities that take place off-site. In addition to link building, promotional tactics can include social media marketing, content marketing, email marketing, influencer marketing, and even offline marketing channels (e.g. TV, radio, billboards).

On-Page SEO

All the activities that take place on the website itself. In addition to posting relevant, high-quality content, on-page SEO includes HTML code optimization (e.g., title tags, meta tags), information architecture, site navigation, and URL structure.

Organic Search

Natural or free listings that appear on the SERP. These free search results, analyzed and ranked by algorithms, are designed to provide users with the most relevant results based on their query.

Orphan Page

Any webpage that no other page on the same site links to.
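One common way to find orphan pages is to compare the URLs you know exist (for example, from an XML sitemap or a CMS export) with the URLs that internal links actually point to. A minimal sketch with hypothetical URLs; the home page is excluded because it is the site's entry point.

```python
def find_orphans(known_pages: set[str], internal_links: dict[str, list[str]], home: str = "/") -> set[str]:
    """Return known pages that no other page on the site links to."""
    linked_to = {
        target
        for source, targets in internal_links.items()
        for target in targets
        if target != source            # a page linking to itself does not count
    }
    return known_pages - linked_to - {home}

sitemap_urls = {"/", "/products/", "/blog/", "/blog/post-1/", "/landing/spring-sale/"}
links = {
    "/": ["/products/", "/blog/"],
    "/blog/": ["/blog/post-1/", "/blog/"],
}
print(find_orphans(sitemap_urls, links))  # {'/landing/spring-sale/'}
```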

Outbound Link

A link that takes visitors to a page on a different site than the one they’re currently on.

P

PageRank

According to Google: “PageRank is a measure of the importance of a page based on inbound links from other sites. Put simply, each link to a page on your site from another site adds to that site’s PageRank. Not all links are created equal.” The algorithm is named after Google co-founder Larry Page.
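A commonly used normalized form of the original PageRank formula iterates PR(p) = (1 - d) / N + d × Σ PR(q) / L(q) over every page q that links to p, where N is the number of pages, d is a damping factor (0.85 is the usual textbook value), and L(q) is the number of outbound links on q. Below is a small power-iteration sketch on a hypothetical four-page graph. Spreading the score of pages with no outbound links evenly across all pages is one common convention; it is not a description of what Google runs today.

```python
def pagerank(links: dict[str, list[str]], d: float = 0.85, iterations: int = 50) -> dict[str, float]:
    """Power-iteration PageRank over a small link graph; scores sum to 1."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Pages with no outbound links spread their score evenly over all pages.
        dangling = sum(rank[p] for p in pages if not links.get(p))
        new_rank = {p: (1.0 - d) / n + d * dangling / n for p in pages}
        for source, targets in links.items():
            if targets:
                share = d * rank[source] / len(targets)   # each link passes an equal share
                for target in targets:
                    new_rank[target] += share
        rank = new_rank
    return rank

graph = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
scores = pagerank(graph)
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(page, round(score, 3))
```

C ranks highest because three pages link to it, while D, which nothing links to, keeps only the baseline (1 - d) / N.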

Page Speed

The time it takes for the website to load completely. Page speed is a ranking factor.

Pageview

An instance of a webpage being loaded in a browser.

Paid Search

Pay-per-click ads that appear above (and often below) organic search engine results.

PBN

Short for private blog network.

PDF

Short for Portable Document Format. PDF files can contain text, images, links, videos, and more.

Penalty

Persona

A fictional representation of the ideal site visitor or customer – their demographics, behavior, needs, motivations and goals – all based on real data.

Also known as: Customer Persona, Marketing Persona

Personalization

When search engines use your search history, web browsing history, location, and relationships to create a set of search results tailored to a specific user.

PHP

Hypertext Preprocessor is a scripting language used to create dynamic content on web pages.

Piracy

Search engines are designed to lower the organic search rankings of infringing content. In 2012, Google introduced a filter to reduce the visibility of sites that receive a large number of DMCA takedown requests.

Pogo-sticking

When, after entering a query, a searcher jumps back and forth between the SERP and the pages listed in those search results.

Position

PPC (Pay Per Click)

An ad type where advertisers are charged a certain amount (usually determined by bid, relevance, account history, and competition) each time a user clicks on the ad. Combining PPC and SEO can result in more SERP real estate, clicks, and conversions. In addition, PPC data can inform your SEO strategy, and vice versa.

Q

QDF

Query deserves freshness: a search engine may choose to display newer web pages in search results (rather than older pages) if a particular search term is trending, possibly because a news event has caused a spike in searches for that topic.

Quality Content

Content that helps you successfully achieve your business or marketing goals (e.g. generating organic traffic or social campaigns, winning top positions in search results, generating leads / sales).

Quality Link

Incoming link from a reliable, relevant, or trusted website.

Query

A word, question, or phrase that a user enters into a search engine.

R

Rank

Where the website appears in organic search results for a specific query.

Ranking Factor

A single component of the complex series of algorithms that determine where webpages should appear in organic search results for a specific query. For years, Google has said its algorithms “rely on more than 200 unique signals” to help users find the most relevant website or answer.

Reciprocal Links

When two websites agree to exchange links with each other.

Redirect

A technique that sends a user (or search engine) who requested one webpage to a different (but equally relevant) webpage. There are two types of redirects:

Referrer

URL data that identifies the source of the user’s website request.

Reinclusion

The process of asking a search engine to return a site or web pages to its search index after they have been removed.

Relevance

The way search engines measure how closely the content of a webpage matches the context of a query.

Reputation Management

The practice of creating a positive perception of a brand or person on the internet – including in search results and on social media – by minimizing the visibility of negative mentions.

Also Known As: Online Reputation Management, Public Relations

Responsive Website

A website designed to automatically adapt to a user’s screen size, whether viewed on a desktop computer or a mobile device.

Rich Snippet

Structured data can be added to your webpage’s HTML to provide search engines with contextual information when the page is indexed. This information can then be displayed in the SERP, resulting in an enhanced listing known as a rich snippet.

robots.txt

A text file, defined by the Robots Exclusion Protocol (or Standard), that sits at the root of your site and tells search engine crawlers which areas of your site should be ignored.
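Python's standard library ships a robots.txt parser, urllib.robotparser, which makes it easy to check how a set of rules applies to specific URLs. The rules and URLs below are hypothetical. Note that Python's parser applies rules in the order they appear (first match wins), whereas Google documents that the most specific matching rule wins, so results can differ when Allow and Disallow rules overlap.

```python
from urllib.robotparser import RobotFileParser

robots_txt = """\
User-agent: *
Disallow: /private/
Disallow: /search
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())   # parse rules from text instead of fetching them

for url in (
    "https://example.com/blog/post/",
    "https://example.com/private/report.pdf",
    "https://example.com/search?q=seo",
):
    print(url, "allowed" if parser.can_fetch("Googlebot", url) else "blocked")
```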

Return on Investment (ROI)

A way to measure the effectiveness of SEO activities. It is calculated by subtracting the total investment cost from the organic search revenue, dividing the result by the total investment cost, then multiplying by 100.
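A worked example using the standard ROI definition, (revenue - cost) / cost × 100, with made-up figures: $15,000 of organic search revenue on a $5,000 investment is a 200% return.

```python
def seo_roi(organic_revenue: float, total_cost: float) -> float:
    """ROI as a percentage: (revenue - cost) / cost * 100."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (organic_revenue - total_cost) / total_cost * 100

print(seo_roi(15_000, 5_000))  # 200.0
```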

S

Schema

A form of microdata that, when added to a web page, creates an enhanced description (commonly known as a rich snippet) that appears in search results.
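Schema.org vocabulary can be written as microdata, RDFa, or JSON-LD, and Google's structured data documentation recommends JSON-LD where possible. The sketch below builds a JSON-LD block for a hypothetical product with Python's json module. The property names (name, aggregateRating, ratingValue, reviewCount) come from the schema.org Product and AggregateRating types; the values are invented. Whether a rich snippet is actually shown is up to the search engine.

```python
import json

def product_jsonld(name: str, rating: float, review_count: int) -> str:
    """Return a <script> tag containing schema.org Product markup."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

print(product_jsonld("Trail Bike 500", 4.6, 128))
```

The resulting tag is typically placed in the page's HTML, often in the head.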

Scrape

A technique used to copy content or information from a website by means of a computer program or script. Search engines such as Google collect data in a similar way to build a searchable index of websites.

Search Engine

A computer program that allows users to enter a query to retrieve information (e.g. files, web sites, web pages) from that program’s index (i.e. a web search engine such as Google indexes web sites, web pages, and files found on the World Wide Web). The search index is created and updated by a crawler, and the items are analyzed and ranked by a series of algorithms.

See also: Baidu, Bing, DuckDuckGo, Google, Yahoo, Yandex

Search Engine Marketing (SEM)

A general term for increasing your site’s visibility on search engine result pages, covering both paid and organic activities.

Search Engine Optimization (SEO)

The process of optimizing a website – as well as all content on it – so that it appears in prominent positions in organic search engine results. SEO requires understanding how search engines work, what people are looking for (i.e. keywords and key phrases), and why people are searching (intent). Effective SEO makes your website attractive to users and search engines. It is a combination of technical (on-page SEO) and marketing (off-page SEO) aspects.

Search Engine Results Page (SERP)

The page a search engine shows to users after they perform a search. Typically, search engines display around 10 organic search results, sorted by relevance. Depending on the query, other search features may appear, including:

Also known as: SERPs, when referring to multiple pages of search results.

Search History

Search engines track every user’s search (text and voice), every webpage they visit, and every ad they click on. Search engines can use this data to personalize the results for logged in users.

Also Known As: Web browsing history.

Share of Voice

How many impressions a brand receives in the SERP for a set of search terms, compared to the total number of impressions the brand’s competitors receive for the same search terms.
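Share of voice is usually expressed as a percentage of all impressions in the tracked set. This sketch assumes the denominator is the brand's own impressions plus those of the competitors being tracked; the figures are hypothetical.

```python
def share_of_voice(brand_impressions: int, competitor_impressions: list[int]) -> float:
    """Brand impressions as a percentage of all tracked impressions."""
    total = brand_impressions + sum(competitor_impressions)
    return 100.0 * brand_impressions / total if total else 0.0

print(share_of_voice(3_000, [5_000, 2_000]))  # 30.0
```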

Sitelinks

Up to six algorithmically selected links that appear below a site’s listing in a top-ranked organic search result. Pages can be blocked as sitelinks in Search Console or Bing Webmaster Tools.

Also known as: Deep Links (Bing).

Sitemap

List of pages on the site. There are two types of sitemaps:

Sitewide Links

A link that appears on every page of your site, usually in the sidebar or footer of blogs or sites that use templates.

Social Media

Platforms (websites and applications) on which users can interact with each other and create, share, and consume content.

Social Signal

Any factor that demonstrates authority and influence on popular social networking sites. For example, a user’s social authority on Twitter.

While many correlation studies indicate that social signals do influence rankings (e.g., the number of likes / shares received for a given piece of content), Google has publicly stated that social signals are not a direct ranking factor. Popular sites that engage with social media rank well for other reasons.

Spam

Spider

Split Testing

A controlled experiment in which two or more versions of a webpage are compared to measure the effect of a changed variable on conversions. Once the versions have been shown to site visitors long enough to collect a sufficient amount of performance data, a “winner” can be declared.
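How much data is "enough" to declare a winner is left open here. One common approach, assumed in this sketch, is a two-proportion z-test on the conversion rates of two versions, with the sample size fixed in advance (repeatedly checking and stopping early inflates false positives). It uses only the standard library; the numbers are made up.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a: int, visits_a: int, conv_b: int, visits_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates (B minus A)."""
    rate_a, rate_b = conv_a / visits_a, conv_b / visits_b
    pooled = (conv_a + conv_b) / (visits_a + visits_b)
    se = sqrt(pooled * (1 - pooled) * (1 / visits_a + 1 / visits_b))
    z = (rate_b - rate_a) / se
    # Standard normal CDF via the error function: Phi(x) = (1 + erf(x / sqrt(2))) / 2
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value

# Version A: 200 conversions from 10,000 visits (2.0%); version B: 260 from 10,000 (2.6%).
z, p = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these figures z is about 2.83 and p is well below the conventional 0.05 threshold, so B would be declared the winner.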

SSL Certificate

A digital certificate used to authenticate a site’s identity and encrypt information sent to the server using Secure Sockets Layer technology.

Status Codes

Response codes sent by the server when you click a link, request a web page or file, or submit a form. Common HTTP status codes important for SEO:

Stop Word

A frequently used word. For example: a, in, for, is, with, on, the. In the past, search engines ignored these words to save time and resources when indexing. Search engines have evolved a lot since then and now take stop words into account where they matter, so there is nothing to worry about for SEO purposes.

Subdomain

A separate section that exists within the root domain. For example: http://jobs.searchenginejournal.com/ is a subdomain that exists within the main domain https://www.searchenginejournal.com/

T

Taxonomy

Organizing and categorizing your website to make content as easy to find as possible and to help users complete their desired tasks on the site.

Time on Page

An inaccurate estimate of the time a user spends on a specific webpage. Pages with high exit rates can significantly distort this data.
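The inaccuracy follows from how hit-based analytics tools commonly derive the metric: time on page is the gap between one pageview's timestamp and the next pageview in the same session, so the last page of a session (the exit page) has no end time at all. A minimal sketch with a hypothetical session:

```python
from datetime import datetime
from typing import Optional

def time_on_page(session: list[tuple[str, str]]) -> list[tuple[str, Optional[float]]]:
    """Pair each URL with seconds until the next pageview; the exit page gets None."""
    stamps = [(url, datetime.fromisoformat(ts)) for ts, url in session]
    result = []
    for i, (url, start) in enumerate(stamps):
        if i + 1 < len(stamps):
            result.append((url, (stamps[i + 1][1] - start).total_seconds()))
        else:
            result.append((url, None))  # no later hit, so the duration is unknown
    return result

session = [
    ("2023-10-10T13:00:00", "/"),
    ("2023-10-10T13:00:45", "/blog/post-1/"),
    ("2023-10-10T13:04:15", "/products/bikes/"),
]
print(time_on_page(session))
# [('/', 45.0), ('/blog/post-1/', 210.0), ('/products/bikes/', None)]
```

A page that is often the last one viewed therefore contributes few measurable durations, which is why high exit rates skew the average.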

Title Tag

An HTML element that acts as the title of a webpage. Typically, the title tag is the title search engines use when displaying their search listings, so it should contain strategic and relevant keywords for that particular page. The title tag should also be written to make sense to people and to attract as many clicks as possible. Typically, title tags should be fewer than 65 characters long.
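A quick audit sketch that pulls the title text from a page with Python's html.parser and flags titles over the 65-character guideline. The threshold is a rule of thumb (search engines generally truncate by display width, not a fixed character count), and the sample HTML is hypothetical.

```python
from html.parser import HTMLParser

class TitleParser(HTMLParser):
    """Capture the text inside the first <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = None

    def handle_starttag(self, tag, attrs):
        if tag == "title" and self.title is None:
            self._in_title = True
            self.title = ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def audit_title(html: str, max_chars: int = 65) -> str:
    parser = TitleParser()
    parser.feed(html)
    title = (parser.title or "").strip()
    if not title:
        return "missing title"
    if len(title) > max_chars:
        return f"too long ({len(title)} chars)"
    return f"ok ({len(title)} chars)"

print(audit_title("<html><head><title>SEO Glossary: 200+ Terms and Definitions</title></head></html>"))
# ok (40 chars)
```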

Top-Level Domain (TLD)

The extension at the end of a web address. They include:

There are also many other industry and country specific options.

Also Known As: gTLD (generic top-level domain); Domain extension.

Traffic

People (and sometimes bots) who visit your website.

Trust

Generally relates to the history of a domain (e.g. whether it cites or includes expert sources, has built a positive reputation, and follows the Webmaster Guidelines).

TrustRank

A link analysis technique that starts from a set of reviewed, reputable “seed” pages to separate good pages from web spam.

U

User-Generated Content (UGC)

Any form of content – videos, blog posts, comments, reviews, etc. – created by users or customers.

Universal Search

When search engines take data from multiple specialized databases and display it on the same SERP. Results can include images, videos, news, shopping, and other types of results.

Unnatural Link

Any links that Google finds suspicious, deceptive, or manipulative. An unnatural link could cause Google to take manual action on your site.

URL

The Uniform Resource Locator is a specific string of characters that points to a resource on the web. In everyday use, the term URL usually refers to a human-readable web address (e.g. www.searchenginejournal.com) entered into a browser to access a website.

URL Parameter

Values added to a URL to track where traffic is coming from (i.e. which link someone clicked to discover your site or webpage). Here is an example of URL parameters (everything after the question mark):

https://www.searchenginejournal.com/example-article-url/999999/?yjsource=share-back-traffic&yjmedium=desktop-share-button&yjcampaign=twitter
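URL parameters sit in the query string after the question mark, as key=value pairs separated by "&". Python's standard urllib.parse can pull them apart; the URL below mirrors the example given for this term.

```python
from urllib.parse import parse_qs, urlsplit

url = ("https://www.searchenginejournal.com/example-article-url/999999/"
       "?yjsource=share-back-traffic&yjmedium=desktop-share-button&yjcampaign=twitter")

parts = urlsplit(url)
# parse_qs returns a list of values per key; keep the first value of each.
params = {key: values[0] for key, values in parse_qs(parts.query).items()}
print(parts.path)   # /example-article-url/999999/
print(params)
# {'yjsource': 'share-back-traffic', 'yjmedium': 'desktop-share-button', 'yjcampaign': 'twitter'}
```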

Usability

How easy it is for people to use your website. Website design, browser compatibility, accessibility for people with disabilities, and other factors all play a role in improving usability and making your website accessible to as many people as possible.

User Agent

User Experience (UX)

The overall impression users are left with after interacting with a brand, its online presence, and its products / services.

V

Vertical Search

A specialized type of search where the focus is only on a specific topic, type of content, or media. For example, YouTube (video), Amazon (shopping), Kayak (travel), Yelp (business reviews).

Virtual Assistant

A bot that uses natural language processing to perform tasks such as web searches. For example, Siri by Apple or Cortana by Microsoft.

Visibility

The prominence and positioning of a website in organic search results.

Voice Search

A type of voice-activated technology that allows users to speak to a device (usually a smartphone) to ask questions or perform an internet search.

W

Webpage

A document that exists on the web and can be viewed by web browsers.

Website

A collection of webpages hosted together on the web.

Website Navigation

How a website links its webpages to help visitors navigate through the site. Site navigation comes in several different forms, including:

Also Known As: Internal Links (or Internal Linking), Site Architecture

Webspam

Any method that exists for the sole purpose of cheating or manipulating search engine algorithms and / or users.

Also Known As: Black Hat SEO, Spam, Spamdexing, Search engine spam

White Hat

Tactics in line with Google’s guidelines for webmasters.

Word Count

The total number of words that appear in a piece of content. Too little (or thin) content can be a signal of poor quality to search engines.

WordPress

A popular blogging and content management system.

X

XML

Extensible Markup Language is the markup language used by search engines to understand the data on your site.

XML Sitemap

A list of all the pages on a site that search engines need to know about.
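XML sitemaps follow the sitemaps.org protocol: a urlset root element in the http://www.sitemaps.org/schemas/sitemap/0.9 namespace containing one url entry per page, each with a required loc and optional fields such as lastmod. A minimal generator using Python's xml.etree.ElementTree; the URLs and dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Return sitemap XML for (absolute URL, last-modified date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc          # ElementTree escapes characters such as &
        ET.SubElement(url, "lastmod").text = lastmod  # W3C datetime, e.g. YYYY-MM-DD
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + ET.tostring(urlset, encoding="unicode")

xml_text = build_sitemap([
    ("https://www.example.com/", "2023-10-01"),
    ("https://www.example.com/blog/post-1/", "2023-10-05"),
])
print(xml_text)
```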

Y

Yahoo

Yahoo was founded in April 1994 and was an extremely popular search engine and portal in the 1990s. Yahoo was mostly human driven, at least until June 2000, when an unknown search engine named Google began feeding into Yahoo’s organic search results. The deal lasted until 2004, when Yahoo started using its own search technology. As of 2010, Yahoo’s organic search results are powered by Microsoft’s Bing search engine.

Yandex

The most popular search engine in Russia, Yandex, was founded on September 23, 1997 by Arkady Volozh and Ilya Segalovich.

Featured image: Blank-k / Shutterstock

What are the different types of SEO content formats?

The most popular types of SEO content

- Short-form content.

- Long-form content.

- Blog content.

- Pillar and evergreen content.

- Comprehensive content.

- Content for social channels.

- Data-driven content.

- Thought leadership.

What are the three types of SEO?

There are three types of SEO you need for a well-rounded organic search strategy: on-page SEO, technical SEO, and off-page SEO.

What is SEO format?

SEO writing is a form of writing that helps web pages become more visible in the major search engines. A highly visible webpage with good content shows up in the first few pages of results when someone searches for that topic.

What is SEO document?

You can make sure the right people see your content by making it easier to find. Search engine optimization, or SEO, is the process of increasing a website’s visibility on search engines in order to attract more relevant traffic.

What does SEO stand for?

SEO stands for Search Engine Optimization, the process of optimizing a website’s technical configuration, content relevance, and link popularity so that its pages are easy to find, relevant, and popular in relation to user search queries, and as a result are ranked better by search engines.

What is a white hat technique?

White hat SEO refers to strategies that target a human audience rather than trying to game the search engine’s algorithm. Any good SEO campaign will focus on white hat SEO, with quality content and link building at the core of its approach.

What are gray hat techniques?

Gray hat marketing is a combination of widely accepted SEO techniques, such as paying someone to write an article for your website, and forbidden practices known as “black hat marketing”, such as hiding keywords on a page by making them the same color as the background.

What is an example of white hat optimization?

Examples of white hat SEO include: Offering high-quality content and services. Fast site loading and mobile friendliness. Use of descriptive, keyword-rich meta tags.

What is white hat optimization?

White hat SEO is a collection of approved search engine optimization tactics designed to increase a website’s position on the search engine result page (SERP). Search results that appear as a result of approved methods and not payments or scams are known as organic search results.

What is an example of black hat optimization?

Common examples of black hat SEO strategies include using invisible text, doorway pages, keyword stuffing, page swapping, or adding unrelated keywords to a page.

What are black hat techniques?

Black hat SEO is a practice that goes against search engine guidelines and is used to obtain a higher position for a site in search results. These unethical tactics do not solve the searcher’s problem and often result in penalties from search engines. Black hat techniques include keyword stuffing, cloaking, and the use of private link networks.

What is a black hat tool?

Black hat SEO refers to a set of techniques that are targeted only at the search engine results and not at people. The techniques used can range from spamming to completely malicious, all of which go against the guidelines set by the search engine.

What is black hat in digital marketing?

Black hat SEO is the practice of violating search engine guidelines to manipulate the SERP (search engine results page) and gain higher rankings. Marketers who use black hat SEO methods may face Google penalties, be removed from search engines entirely, or see a steep drop in rankings.

What is considered a black hat?

Black hat hackers are criminals who hack computer networks with malicious intent. They can also host malware that destroys files, holds computers hostage, or steals passwords, credit card numbers, and other personal information.

What are the two techniques of SEO?

SEO techniques fall into two broad categories which are White Hat SEO and Black Hat SEO.

What is SEO and what are SEO techniques?

Search engine optimization (SEO) is the art and science of increasing your pages’ rank on search engines such as Google. Since search is one of the main ways to discover online content, higher search engine rankings can lead to increased traffic to your website.

What are the three main components of SEO?

The three basic parts of SEO are technical SEO, content / relevance SEO, and promotion / authority SEO.

What are the 3 Cs of SEO?

Simply put, the basics of SEO can be boiled down to the 3 C’s: content, code, and credibility.

What are the 3 most important on-page SEO factors?

In summary, the most important on-page SEO factors are well-written and original content, a good title and description tag, formatting with proper use of headings and subheadings, the use of images and their descriptions, and well-composed URLs that contain the most important keyword and are not too long.

Which is the most important on-page SEO factor?

Meta tags are the most important on-page SEO factor. They consist of four basic elements: Meta Title, Meta Description, Meta Keywords, and Meta Robots Tag.

What are the 3 main components of SEO?

There are three types of SEO you need for a well-rounded organic search strategy: on-page SEO, technical SEO, and off-page SEO. If you break down your strategy and think of SEO as these three categories, it will be much easier to organize and execute your optimization plans.

What is SEO and its components?

SEO stands for “Search Engine Optimization”. Simply put, SEO helps you increase your online visibility on search engines like Google or Bing when potential customers search for keywords related to your business. The better your SEO is, the more visible you become, which increases your potential for customer visits.

Why is a glossary important in a book?

While particularly important for technical translations and marketing content, the glossary provides the basis for consistent, high-quality translations of all kinds. Ultimately, a glossary not only saves you time and money, but also helps ensure the success of your brand across languages and cultures.

How important is it to provide a glossary of terms and a reference list?

A list of key terms with their definitions will help to ensure that the correct terms are used consistently throughout the text. In the case of technical translation, it would be unacceptable to translate the word differently each time.

What is the purpose of a glossary in a book?

Think of glossaries as being specific to your book. A glossary pairs words with their definitions, much like a dictionary page. It’s that simple. Which words and definitions you include depends a lot on what you write.

What is a glossary and what is it used for?

noun, plural glossaries. a list of terms from a specific subject, field, or area of usage, with accompanying definitions. such a list at the back of a book, explaining or defining difficult or unusual words and expressions used in the text.

What is a glossary? Explain with an example.

A glossary is a list of words and their meanings. An example of a glossary is the alphabetical list of difficult words at the end of a book.

What does a glossary show?

A glossary of special, unusual, or technical words or phrases is an alphabetical list of words and phrases with their meanings, for example at the end of a book on a specific topic.